You think you know how to roll out models to the edge until your first pilot stalls on flaky networks, opaque driver errors, and devices that drift out of spec. In our experience working with startups, the biggest time savers come from choosing platforms that already handle int8 quantization for TensorFlow Lite, Kubernetes node autonomy when links drop, and MQTT-based device state sync, before your team writes any custom glue. Most teams discover deployment bottlenecks during field trials, not from glossy diagrams. The goal here is to shortcut that pain with real trade-offs and pricing signals, backed by third-party sources such as IDC's latest outlook on edge spending and independent reviews.

Global spending on edge computing is forecast to reach about 297 billion dollars in 2026 and roughly 380 billion dollars by 2028, according to IDC's spending guide as covered by Computer Weekly and Telecompaper, with AI inference a core driver of that growth. Below you will learn where each platform fits, how it deploys, what it costs, and which gotchas reviewers and maintainers call out.
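As a quick sanity check on those two forecast figures, the annual growth rate they imply can be worked out directly. This is a minimal sketch that assumes simple compounding between the 2026 and 2028 numbers quoted above; the function name is illustrative and not taken from any IDC material.

```python
# Forecast figures quoted in the paragraph above, in billions of US dollars.
spend_2026 = 297.0
spend_2028 = 380.0
years = 2028 - 2026


def implied_annual_growth(start: float, end: float, periods: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `periods` years."""
    return (end / start) ** (1.0 / periods) - 1.0


cagr = implied_annual_growth(spend_2026, spend_2028, years)
print(f"Implied annual growth, 2026 to 2028: {cagr:.1%}")
```

Under that assumption the result lands at roughly 13 percent per year.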

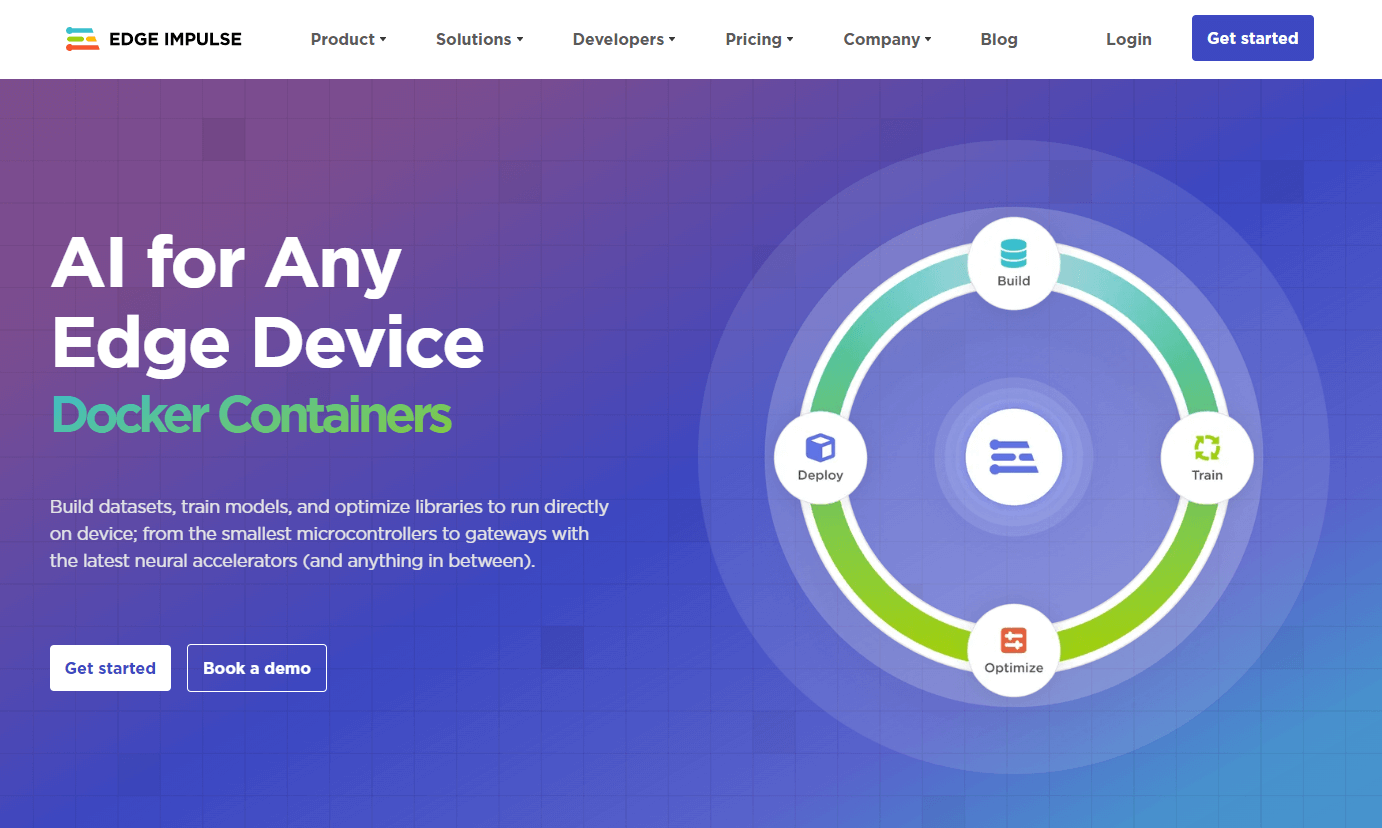

Edge Impulse

A development platform for collecting sensor or vision data, training compact models, and deploying them onto microcontrollers, NPUs, and Linux edge devices. According to vendor documentation, it standardizes the pipeline from dataset curation to on device inference and monitoring.

Best for: Teams that need a single workflow from data collection to deployment across diverse embedded hardware.

Key Features:

- End-to-end workflow for data acquisition, feature extraction, model training, optimization, and deployment, as described in analyst coverage of Qualcomm's acquisition (Forbes analysis).

- Broad hardware coverage at the edge, from MCUs to NPUs, as cited in acquisition reports and partner announcements (Fierce Electronics news).

- Visual, low-code model building that accelerates first deployments, a point repeated across independent user reviews (G2 reviews).

Why we like it: After helping startups scale PoCs to hundreds of devices, we have found that a consistent pipeline matters more than squeezing out the last percent of accuracy. Edge Impulse shortens the path from raw sensor streams to something that runs on tiny MCUs, then gives you a predictable export to production silicon.

Notable Limitations:

- Advanced customization depth is limited compared with hand rolled ML, per multiple third party user reviews.

- Some reviewers note data import and scaling quirks that require workarounds.

- Corporate change risk, since Edge Impulse was acquired by Qualcomm in March 2025, which may shift roadmap or licensing focus (Forbes analysis).

Pricing: No pricing is published on trusted third-party listings, and G2 lists no tiers. The vendor's pricing page shows a free developer plan and a custom enterprise plan. Verify current terms with sales before committing.

Google Coral (Edge TPU)

An edge inference hardware family that accelerates quantized TensorFlow Lite models with a dedicated Edge TPU. Common form factors include USB, M.2, PCIe, and developer boards used in gateways and cameras.

Best for: Vision and audio inference at low power on constrained devices where int8 TensorFlow Lite is acceptable.

Key Features:

- 4 TOPS peak performance at about 2 TOPS per watt for int8 models, documented by independent reseller specs for modules and boards (SparkFun spec, module pricing, SparkFun Dev Board Mini).

- Runs quantized TensorFlow Lite models with very high throughput on MobileNet class networks, verified across multiple product listings and datasheets from reputable distributors.

- Available in USB and SBC form factors, with real retail pricing references from established electronics sellers (Adafruit Dev Board).
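Because the Edge TPU runs int8 models, it helps to see what quantization does to a tensor's values. The following is a minimal sketch of generic affine (scale and zero point) int8 quantization in plain Python. It is not the TensorFlow Lite converter or the Coral compiler, and the helper names `choose_params`, `quantize`, and `dequantize` are hypothetical.

```python
from typing import List, Tuple

INT8_MIN, INT8_MAX = -128, 127


def choose_params(values: List[float]) -> Tuple[float, int]:
    """Pick a scale and zero point that map the data range onto int8."""
    lo = min(min(values), 0.0)  # keep 0.0 inside the range so it maps to an exact integer
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (INT8_MAX - INT8_MIN) or 1.0  # fall back to 1.0 for all-zero input
    zero_point = round(INT8_MIN - lo / scale)
    return scale, max(INT8_MIN, min(INT8_MAX, zero_point))


def quantize(values: List[float], scale: float, zero_point: int) -> List[int]:
    """Map floats to int8 codes, clamping anything outside the representable range."""
    return [max(INT8_MIN, min(INT8_MAX, round(v / scale) + zero_point)) for v in values]


def dequantize(codes: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate floats from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


# Illustrative weights only; real tensors come from your trained model.
weights = [-0.5, 0.0, 0.25, 1.5]
scale, zero_point = choose_params(weights)
q = quantize(weights, scale, zero_point)
restored = dequantize(q, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"max round-trip error = {max_error:.5f}")
```

The round-trip error stays within one quantization step, which is why the accuracy impact has to be measured per model rather than assumed.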

Why we like it: Across the tech companies we have worked with, our team has seen Coral reduce CPU load by orders of magnitude for classic detection pipelines, which simplifies thermal design and the BOM for fanless devices.

Notable Limitations:

- Narrow framework support: best results come from quantized TensorFlow Lite models rather than other runtimes, as reflected in distributor spec sheets.

- Availability and price volatility, with wide swings across resellers and regions that complicate large buys.

- Community reports of driver and kernel friction on certain PCIe and Windows setups, which can add integration time for PC based deployments (Home Assistant and Frigate community threads).

Pricing: Real world retail varies by form factor and reseller. Examples at the time of writing, subject to change: Dev Board about 175 dollars at a major US distributor, Dev Board Mini about 110 dollars, surface mount accelerator module about 22.95 dollars per unit in volume.

KubeEdge

An open source CNCF graduated project that extends Kubernetes orchestration to edge nodes, enabling edge autonomy during cloud disconnects and device management alongside containers. It is widely used to run containers near data sources.

Best for: Organizations standardizing on Kubernetes that need offline tolerant orchestration and device twin management at the edge.

Key Features:

- Kubernetes-native orchestration that keeps workloads running at the edge when connectivity drops, then resyncs state when the link returns, as described by independent technical press and CNCF communications (The New Stack explainer, CNCF graduation announcement).

- DeviceTwin and EventBus components for MQTT-based device state and twin synchronization across cloud and edge, documented in community resources and references.

- Project maturity, with CNCF graduation in October 2024 and ongoing release cadence, signaling active governance and adoption.
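To make the offline autonomy and twin synchronization ideas concrete, here is a minimal sketch of an edge-side twin that records reported state locally, queues updates while the link is down, and flushes them in order on reconnect. It illustrates the pattern only. `LocalTwin` and its methods are hypothetical and are not KubeEdge's DeviceTwin API, and the `sent` list stands in for messages that would be published upstream, for example over MQTT.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple


@dataclass
class LocalTwin:
    """Hypothetical edge-side twin; not KubeEdge's DeviceTwin API."""
    desired: Dict[str, Any] = field(default_factory=dict)
    reported: Dict[str, Any] = field(default_factory=dict)
    connected: bool = True
    pending: List[Tuple[str, Any]] = field(default_factory=list)  # queued while offline
    sent: List[Tuple[str, Any]] = field(default_factory=list)     # stand-in for upstream publishes

    def report(self, key: str, value: Any) -> None:
        """Record a device reading locally; send it now or queue it if the link is down."""
        self.reported[key] = value
        target = self.sent if self.connected else self.pending
        target.append((key, value))

    def delta(self) -> Dict[str, Any]:
        """Desired properties whose reported value does not match yet."""
        return {k: v for k, v in self.desired.items() if self.reported.get(k) != v}

    def reconnect(self) -> int:
        """Flush queued updates in their original order once the link returns."""
        self.connected = True
        flushed = len(self.pending)
        self.sent.extend(self.pending)
        self.pending.clear()
        return flushed


twin = LocalTwin(desired={"fan": "on", "threshold": 70})
twin.report("fan", "off")            # link up: sent immediately
twin.connected = False               # WAN drops
twin.report("threshold", 70)         # queued locally
twin.report("fan", "on")             # queued locally
drift_while_offline = twin.delta()   # {} because local state already matches desired
flushed = twin.reconnect()           # 2 queued updates flushed in order
print(drift_while_offline, flushed, twin.sent)
```

The design point is that the device converges on its desired state from local data alone, and the cloud only catches up afterward.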

Why we like it: After helping startups scale, our team has learned that putting Kubernetes muscle at the edge reduces one-off tooling and gives SRE teams primitives they already know, from deployments and CRDs to metrics.

Notable Limitations:

- Operational complexity is nontrivial and real Kubernetes expertise is required, a theme noted by independent explainers and edge architecture write-ups.

- Windows edge node support exists but is newer compared with Linux, which can affect driver and plugin availability in mixed OS fleets, based on historical release notes and coverage over time.

- You will still own integrations with your CI, secrets, and observability stack, which is powerful but adds responsibility.

Pricing: Open source with no license fee. Budget for platform engineering and infrastructure. No vendor pricing applies.

Edge AI Orchestration & Deployment Tools Comparison: Quick Overview

| Tool | Best For | Pricing Model | Highlights |
|---|---|---|---|
| Edge Impulse | Fast path from data to embedded deployment across many chips | Subscription, enterprise custom (free developer plan available) | End to end ML pipeline with export to MCUs, NPUs, Linux targets |
| Google Coral (Edge TPU) | Low power TFLite int8 inference on gateways and SBCs | Hardware, one time purchase | 4 TOPS peak, strong MobileNet throughput, small thermal envelope |
| KubeEdge | K8s native orchestration with offline edge autonomy | Open source (free) | Kubernetes API at the edge, device twin and MQTT integration |

Edge AI Orchestration & Deployment Platform Comparison: Key Features at a Glance

| Tool | Data to Model Pipeline | Hardware Acceleration | Fleet Orchestration |
|---|---|---|---|
| Edge Impulse | Integrated dataset, training, optimization, deployment | Targets MCUs to NPUs per exports | Basic device management, not a full K8s orchestrator |
| Google Coral | External to platform | Edge TPU, int8 TFLite at 4 TOPS | None, depends on your OS or container stack |
| KubeEdge | External to platform | Neutral, runs containers on CPU or attached accelerators | Full K8s style deployments with edge autonomy |

Edge AI Orchestration & Deployment Options

| Tool | Cloud API / On-Premise | Air-Gapped | Integration Complexity |
|---|---|---|---|
| Edge Impulse | Yes, SaaS workflow; enterprise on-prem options may exist, verify with sales | Possible via exported binaries and SDKs for devices | Low to medium, depends on hardware variety |
| Google Coral | No cloud API, hardware only; on-premise yes | Yes | Low to medium, drivers and kernel versions can add friction for PCIe and Windows |
| KubeEdge | K8s API compatible; on-premise yes | Yes | Medium to high, brings full K8s operations to the edge |

Edge AI Orchestration & Deployment Strategic Decision Framework

| Critical Question | Why It Matters | What to Evaluate |
|---|---|---|
| Do you need offline autonomy for hours or days? | Networks at the edge are unreliable | KubeEdge's behavior during disconnect and resync, local state stores. Red flag: cloud-only agents that crash or stall on link loss |
| Are your models TFLite int8 friendly? | Coral excels with quantized TFLite | Model portability tests and quantization impact on accuracy. Red flag: expecting PyTorch or FP32 models to run on Coral without major changes |
| How many hardware SKUs must you support? | Fleet diversity adds time and cost | Edge Impulse export coverage and device SDK maturity. Red flag: one-off build scripts per device with no repeatable pipeline |
| Who will operate the platform? | Opex dominates TCO | K8s skills, observability, GitOps maturity. Red flag: no dedicated owner for upgrades, certs, and secrets |

Edge AI Orchestration & Deployment Solutions Comparison: Pricing & Capabilities Overview

| Organization Size | Recommended Setup | Cost Notes |
|---|---|---|
| Startup, single product | Edge Impulse for pipeline, Coral for prototype, graduate to production silicon later | Hardware is a one-time cost; platform pricing is highly variable, so confirm per contract and BOM |
| Mid market with plants | KubeEdge at sites, Edge Impulse or internal training pipeline, accelerators per workload | Infra plus SRE time, driven by node count, support staffing, and replacement cycles |
| Enterprise, regulated | KubeEdge with strict RBAC and air-gapped registries, curated accelerators | Significant platform engineering opex, often multi-year programs |

Problems & Solutions

- Problem: Real-time defect detection on a line with a 10 millisecond budget and a 10 watt power cap.

  Solution: Deploy a quantized TensorFlow Lite model on Coral hardware to target sub-10-millisecond inference at a few watts; independent distributor specs show 4 TOPS at about 2 TOPS per watt with strong MobileNet throughput.

- Problem: Edge sites lose WAN for hours, yet containers must keep running and device states must not drift.

  Solution: Run workloads under KubeEdge to keep Kubernetes primitives operational at the edge, then resynchronize state when links return. Independent coverage describes this as a core design goal, and CNCF graduation materials reinforce it.

- Problem: Building, testing, and exporting MCU-scale models across different vendors without bespoke code for each chip.

  Solution: Use Edge Impulse to standardize data capture, feature extraction, training, and exports, as noted in analyst breakdowns of its workflow and in user reviews that praise rapid deployment to edge devices.
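For the first scenario (a 10 millisecond budget under a 10 watt cap), the budget arithmetic is worth writing down before buying hardware. The sketch below uses the 4 TOPS and 2 TOPS per watt figures quoted in this article; the per-stage timings are hypothetical placeholders that you would replace with measurements from your own device.

```python
from typing import Dict

PEAK_TOPS = 4.0            # peak int8 throughput quoted in this article
TOPS_PER_WATT = 2.0        # efficiency quoted in this article
POWER_CAP_W = 10.0         # power cap from the scenario
LATENCY_BUDGET_MS = 10.0   # per-frame budget from the scenario

# Accelerator draw at peak, and what remains for the host CPU, camera, and I/O.
accelerator_watts = PEAK_TOPS / TOPS_PER_WATT
headroom_watts = POWER_CAP_W - accelerator_watts


def fits_budget(stage_ms: Dict[str, float], budget_ms: float) -> bool:
    """True when the summed per-frame stage latencies stay within the budget."""
    return sum(stage_ms.values()) <= budget_ms


# Hypothetical timings in milliseconds; measure these on real hardware.
stages = {"capture_and_resize": 2.5, "inference": 4.0, "postprocess": 1.5}

print(f"Accelerator at peak: {accelerator_watts:.1f} W, headroom: {headroom_watts:.1f} W")
print("Within latency budget:", fits_budget(stages, LATENCY_BUDGET_MS))
```

The takeaway is that inference time alone is not the budget: capture, preprocessing, and postprocessing all count against the same 10 milliseconds.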

The Bottom Line on Edge AI Orchestration & Deployment

If your priority is a repeatable pipeline from data to embedded binaries, Edge Impulse saves calendar time, provided you accept its guardrails and track post-acquisition roadmap signals. If you only need efficient inference and can commit to quantized TFLite, Coral still delivers strong performance per watt for classic models, though you must check availability and driver fit against your OS and kernel. If you want Kubernetes-native control that survives intermittent links, KubeEdge is the mature open source choice, backed by CNCF graduation and a growing ecosystem. For budgeting context, the broader edge market is forecast to grow at a double-digit annual rate, which justifies investing in tooling that cuts deployment time and operational risk.