HTTrack is a free and open-source web crawler that lets users download copies of websites to their own computers for offline browsing.

HTTrack also includes proxy support, which can be used to maximize download speed. It suits both personal (capture) and commercial (online web mirror) use, and it can be run as a command-line program or through a graphical shell. Given this flexibility, users with more programming experience in particular should give HTTrack a try.
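To illustrate the command-line route, here is a minimal sketch that drives HTTrack from Python. It assumes the `httrack` binary is installed and on your `PATH`, and it uses only a few documented HTTrack options: `-O` for the output directory, `-P` for a proxy (`host:port` or `user:pass@host:port`) and `-rN` for mirror depth. The helper names `build_httrack_command` and `mirror` are hypothetical, and any URL or proxy address you pass in is a placeholder for your own.

```python
import shutil
import subprocess
from typing import List, Optional


def build_httrack_command(
    url: str,
    output_dir: str,
    proxy: Optional[str] = None,
    depth: Optional[int] = None,
) -> List[str]:
    """Assemble an httrack argument list (hypothetical helper) for mirroring `url`."""
    # -O sets the directory where the mirrored site is written
    cmd = ["httrack", url, "-O", output_dir]
    if proxy is not None:
        # -P routes requests through a proxy, given as host:port or user:pass@host:port
        cmd += ["-P", proxy]
    if depth is not None:
        # -rN limits the mirror to N link levels from the start URL
        cmd.append(f"-r{depth}")
    return cmd


def mirror(
    url: str,
    output_dir: str,
    proxy: Optional[str] = None,
    depth: Optional[int] = None,
) -> None:
    """Run httrack (hypothetical wrapper); raises if httrack is missing or exits non-zero."""
    if shutil.which("httrack") is None:
        raise RuntimeError("httrack is not installed or not on PATH")
    subprocess.run(build_httrack_command(url, output_dir, proxy, depth), check=True)
```

For example, `mirror("https://www.example.com/", "/tmp/example-mirror", proxy="proxy.example.com:8080", depth=3)` would copy the site three levels deep through the given proxy. Building the argument list separately from running it keeps the command easy to inspect before anything is downloaded.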

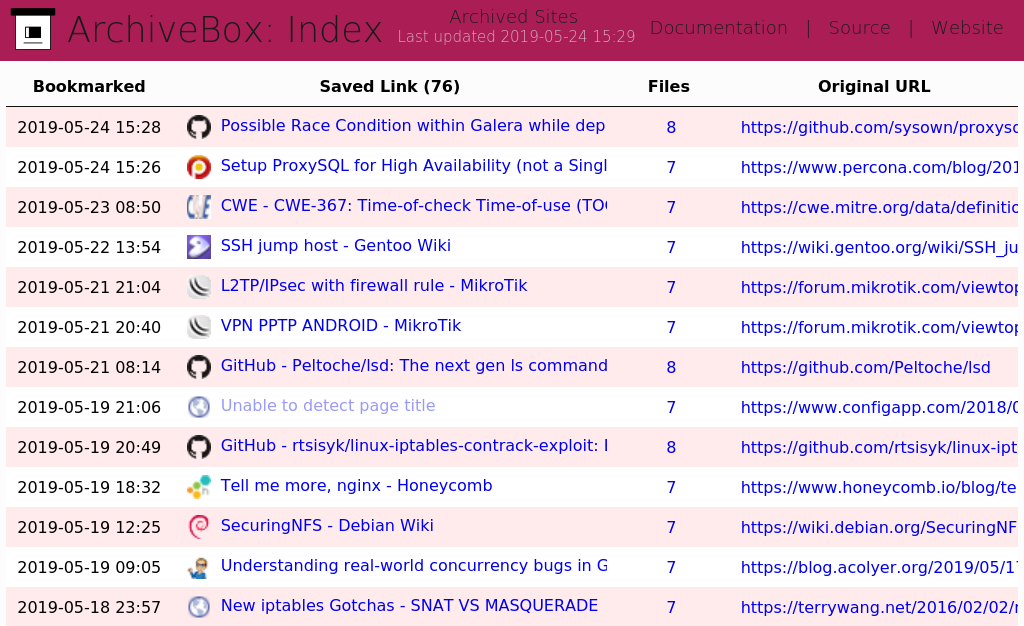

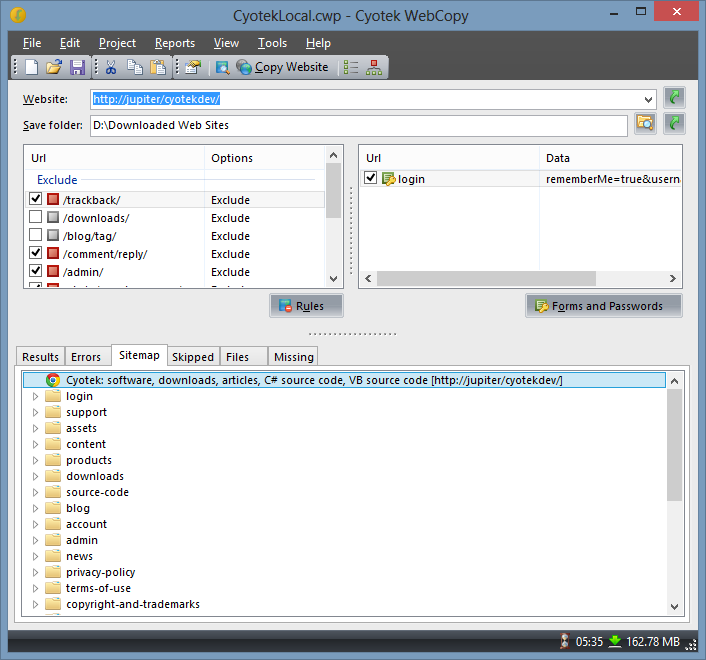

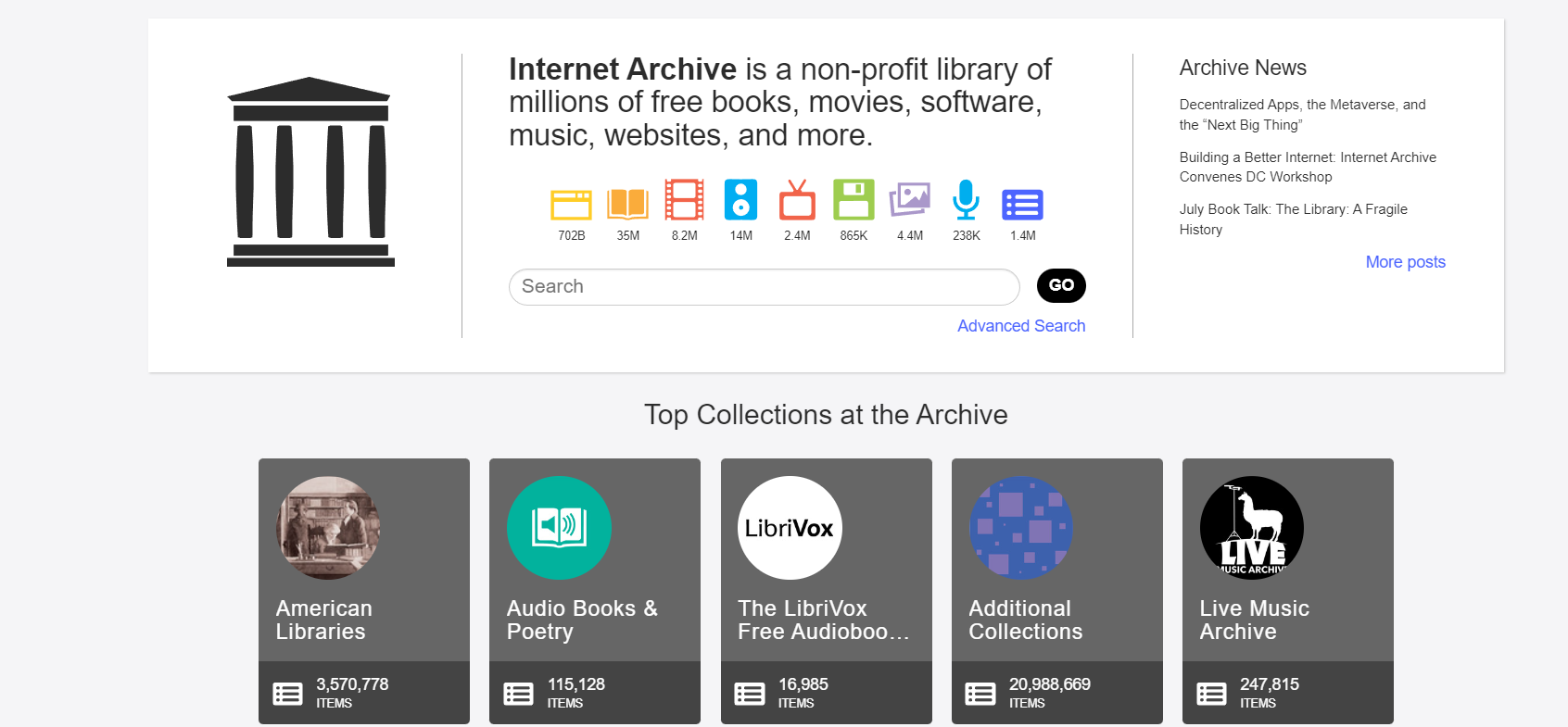

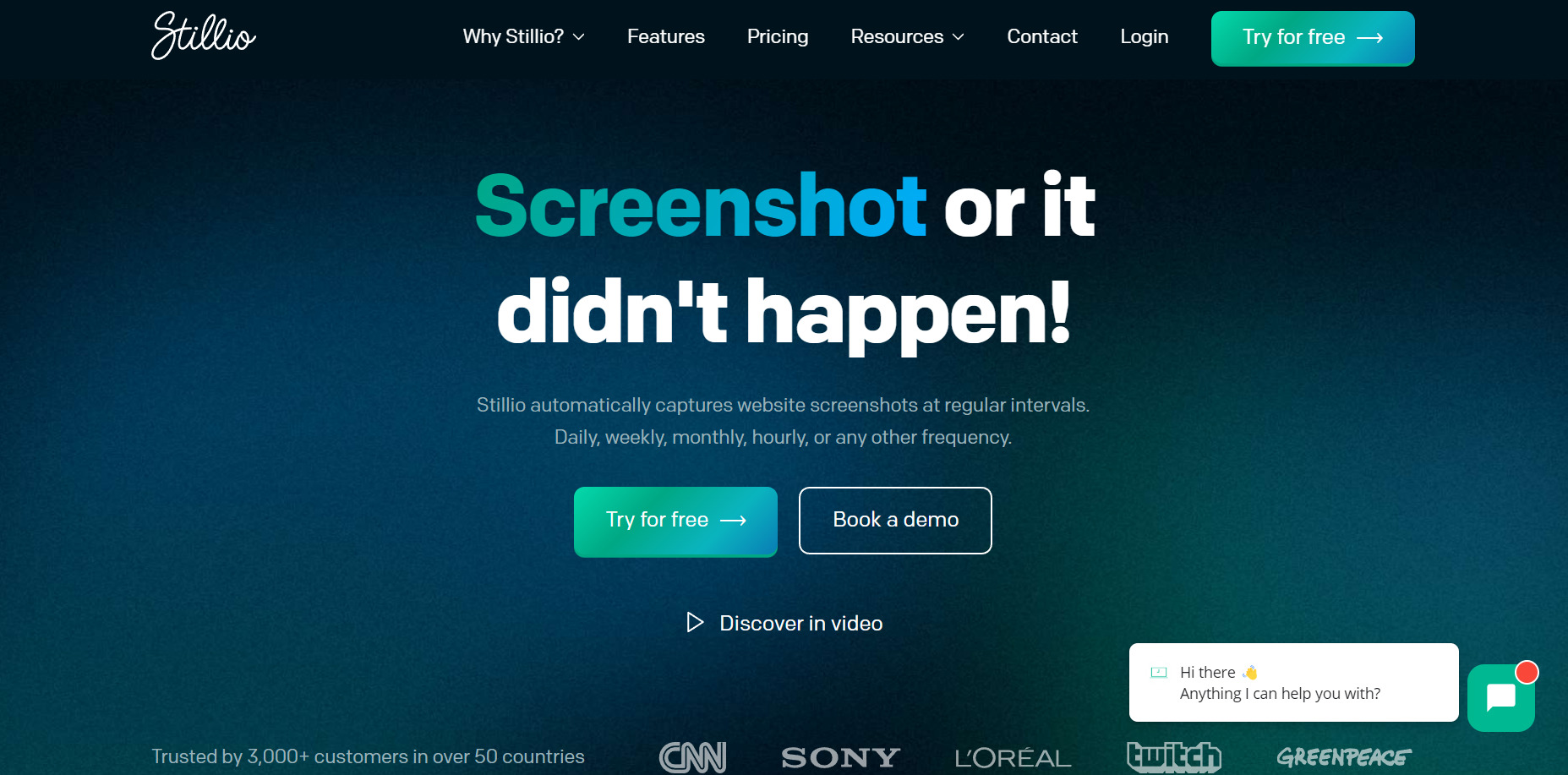

There are plenty of decent tools out there that offer the same range of services as HTTrack, and choosing the best one can get confusing. Luckily, we've got you covered with curated lists of alternative tools to suit your unique work needs, complete with features and pricing.