You think your sandbox posture is solid until a generated script pulls a rogue dependency and your runner phones home. Across the tech companies we have worked with, the biggest mistakes happen when teams first let agents execute code against live credentials. The fastest fix is predictable isolation: microVM snapshots that cut cold starts for bursty workloads, gVisor on Kubernetes to intercept syscalls before they reach the host, and tight egress policies that allow only approved domains. AWS documented how snapshotting reduced cold start pain in Lambda's Firecracker era, which speaks directly to agent sandboxes built on similar primitives, and Google's gVisor and NetworkPolicy docs show how to add defense in depth at the runtime and network layers (AWS compute blog, gVisor docs, GKE NetworkPolicy).

The business case is not theory. The average breach cost was 4.44 million dollars in 2025, per IBM's Cost of a Data Breach analysis. After removing tools with unclear roadmaps or thin isolation, we narrowed our list to four AI code sandbox platforms that consistently delivered safe execution, usable SDKs, and clear pricing. In the next sections you will learn when to pick each, how they contain untrusted code, what to watch out for, and how to avoid surprise bills.

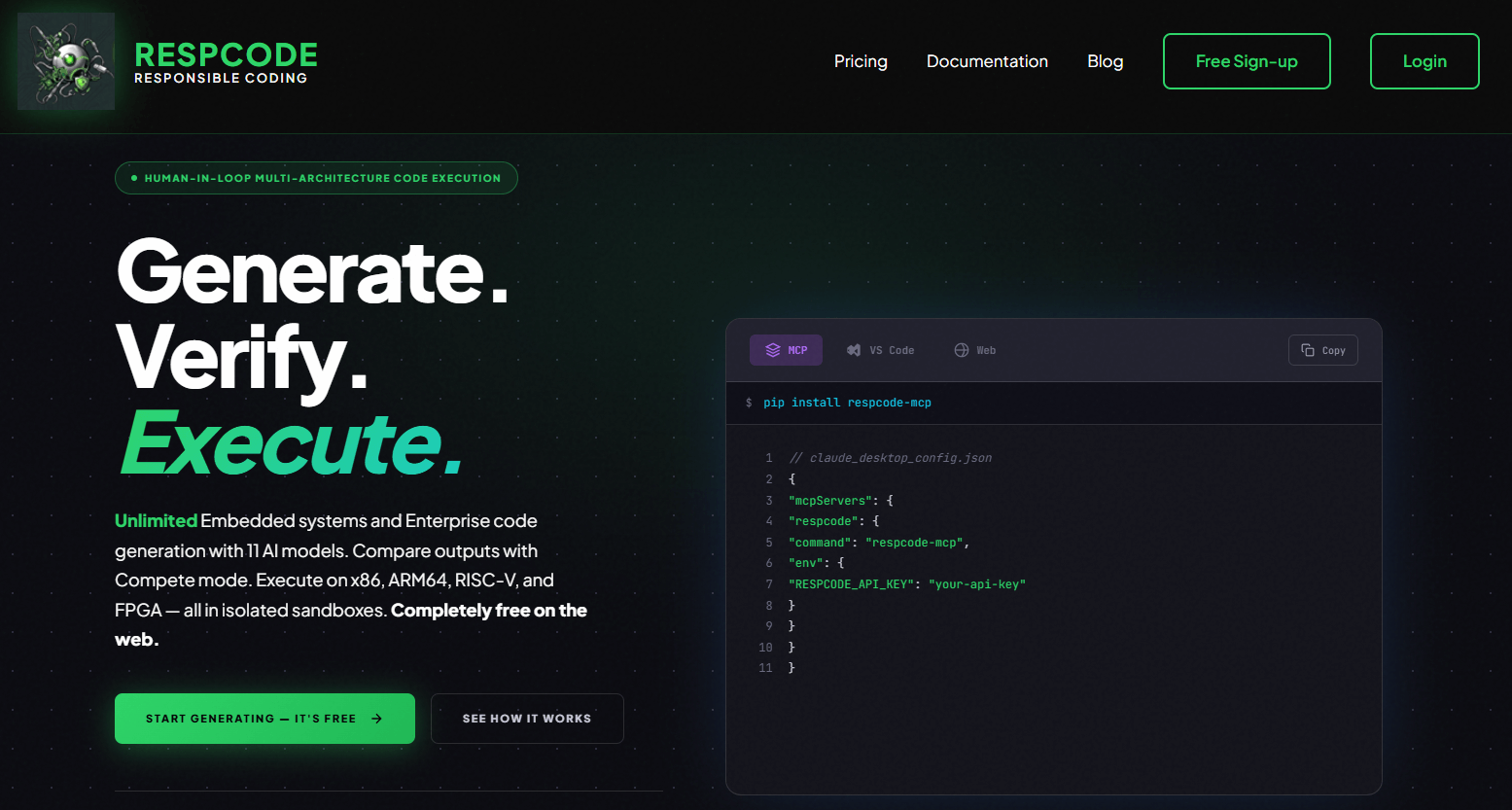

RespCode

Multi model code generation with parallel orchestration and real execution in isolated sandboxes. Per vendor documentation, it targets x86_64, ARM64, and RISC-V, plus Verilog or FPGA simulation, with streaming logs and verification.

- Best for: Embedded and systems teams that want cross architecture code generation plus immediate compile or run validation.

- Key Features: Multi model "Compete, Collab, Consensus" orchestration, sandbox execution with compile or run logs, x86, ARM64, and RISC-V targets, FPGA simulation with planned hardware synthesis, IDE extensions and API.

- Why we like it: The cross architecture focus shortens hardware bring up loops and flags portability bugs before they escape to devices.

- Notable Limitations: Third party reviews are limited as of early 2026, so enterprise references are scarce. FPGA hardware access is listed as coming soon, so verify timelines before planning.

- Pricing: According to vendor pricing as of early 2026, web usage is free, API is per generation, and a desktop IDE plan and per synthesis FPGA fees are offered. Pricing not independently verified by a marketplace, so confirm with sales.

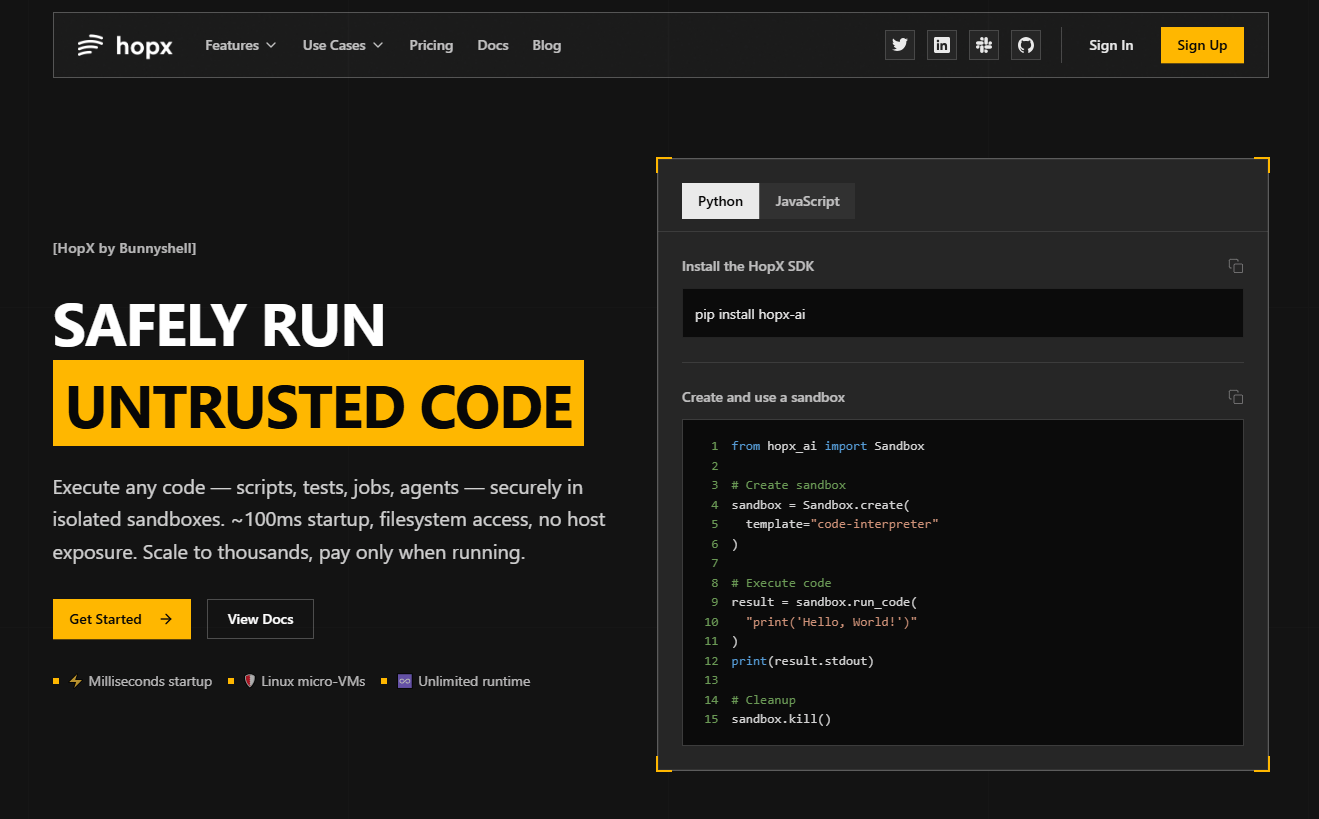

HopX

SDK driven microVM sandboxes to run untrusted, AI generated, or user submitted code with streaming outputs and process control. Per vendor documentation, Firecracker style microVMs deliver near instant startup and no host exposure.

- Best for: Product teams embedding code execution in apps, CI owners who want per PR isolation, and agent builders needing long running jobs.

- Key Features: ~100 ms cold start from snapshots, VM level isolation, multi language execution, streaming stdout or stderr, filesystem and process management APIs.

- Why we like it: The SDK keeps integration time low while the isolation model maps to real world risk from untrusted code paths.

- Notable Limitations: Pricing and SLAs are vendor published with few third party reviews as of early 2026. On premises or air gapped options are not publicly documented, so regulated buyers should confirm deployment models.

- Pricing: Vendor lists per second rates for vCPU and memory with free credits for trials as of early 2026. Pricing is not publicly verified by a marketplace, so contact HopX for a custom quote.

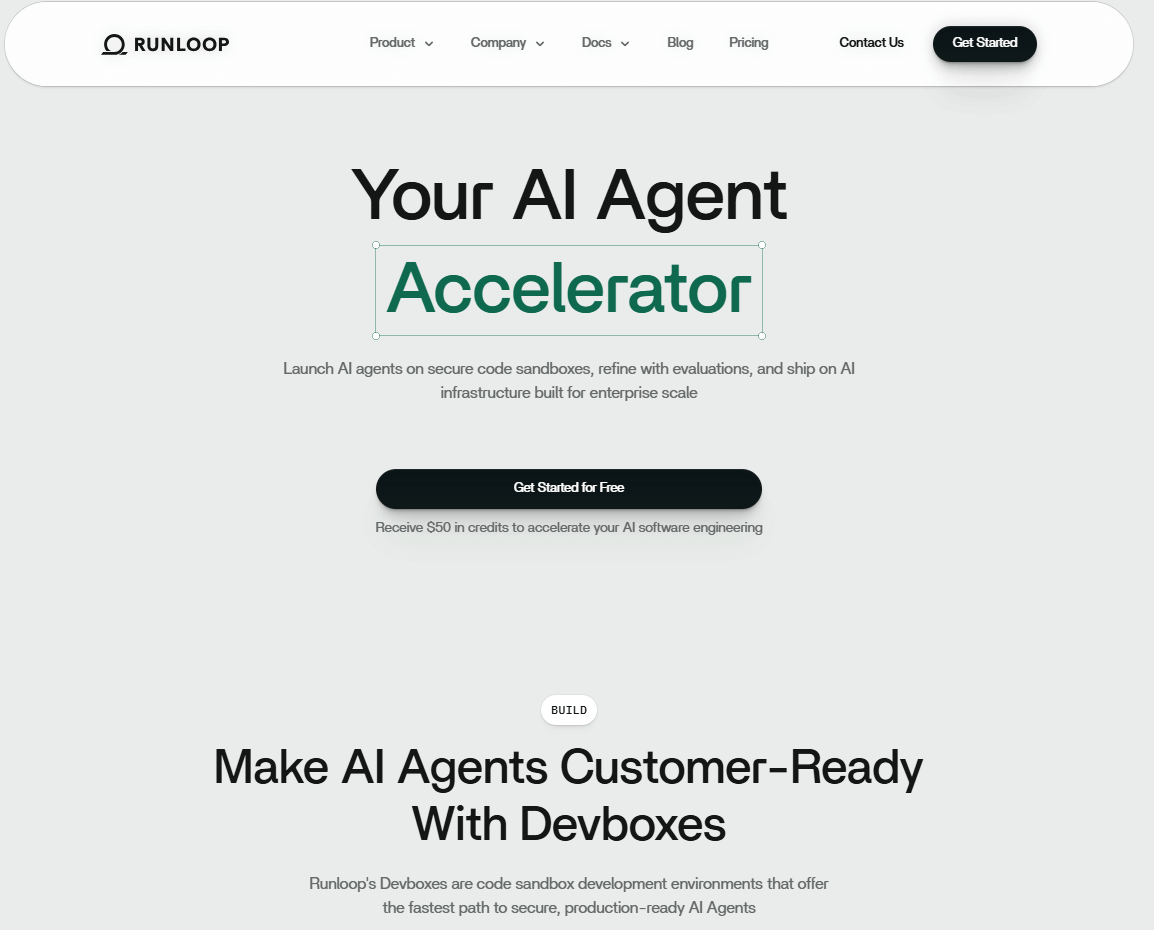

Runloop

Enterprise grade "Devboxes," long lived sandboxed workstations for AI agents with VM isolation, observability, and benchmarks. Public announcements highlight VPC deployment options for enterprises.

- Best for: Security conscious engineering orgs that need controlled, auditable agent environments with snapshots, blueprints, and network policies.

- Key Features: VM based devboxes, stateful or stateless sessions, snapshots and resumes, customizable images or sizes, network egress policies, SDK and dashboard.

- Why we like it: VPC deployment and lifecycle controls address real enterprise constraints, from data residency to incident response workflows.

- Notable Limitations: Independent reviews are limited, so request references and a proof of value.

- Pricing: Usage based with free trial credits ($50 on signup per vendor site). Contact Runloop for enterprise and volume quotes.

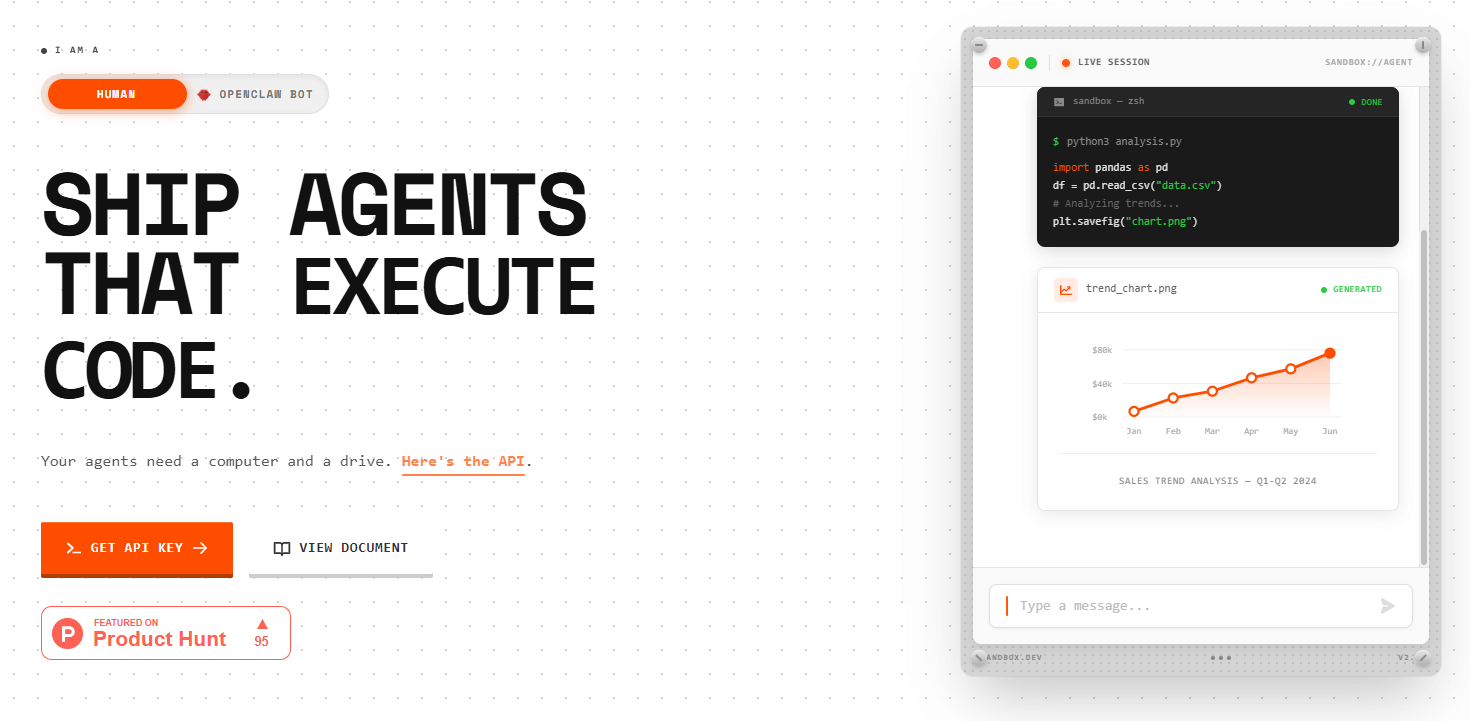

Agent Sandbox

API first sandbox runtime so agents can execute code, manage artifacts, and pass files in or out without touching production systems. Public pages describe per second compute and per MB storage billing.

- Best for: Teams adding a "code interpreter" style feature to products and data teams who need a low friction way to run user code safely.

- Key Features: Python or shell execution in isolated workspaces, manifest based dependency install, artifact upload or download, SDKs to integrate with agent frameworks.

- Why we like it: Clear mental model, quick starts, and a metered model that fits spiky evaluation workloads.

- Notable Limitations: Per second metering makes long running jobs cost sensitive, so add quotas and alerts. Third party reviews exist but are still limited.

- Pricing: Third party summaries report 0.00025 dollars per second for compute and 0.0005 dollars per MB for storage with free trial credits (Stork.AI overview). Confirm current rates before committing.
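To see how per second metering adds up, here is a minimal Python sketch that plugs in the rates reported above. The function names are hypothetical, the rates are the third party figures rather than confirmed vendor pricing, and the sketch assumes storage is a one time per MB charge, which the cited summary does not spell out.

```python
# Rates as reported by the third party summary cited above.
# These are assumptions for illustration; confirm current vendor pricing.
COMPUTE_USD_PER_SECOND = 0.00025
STORAGE_USD_PER_MB = 0.0005  # assumed to be a one time charge per MB stored


def estimate_run_cost(runtime_seconds: float, storage_mb: float = 0.0) -> float:
    """Return the estimated cost in dollars for a single sandbox run."""
    if runtime_seconds < 0 or storage_mb < 0:
        raise ValueError("runtime and storage must be non-negative")
    compute = runtime_seconds * COMPUTE_USD_PER_SECOND
    storage = storage_mb * STORAGE_USD_PER_MB
    return compute + storage


def max_runtime_for_budget(budget_usd: float) -> float:
    """Return how many compute seconds a budget covers, ignoring storage."""
    if budget_usd < 0:
        raise ValueError("budget must be non-negative")
    return budget_usd / COMPUTE_USD_PER_SECOND


if __name__ == "__main__":
    # A 30 second evaluation run that stores 100 MB of artifacts.
    print(f"short run: ${estimate_run_cost(30, 100):.4f}")
    # An agent that stalls for a full hour with no artifacts.
    print(f"stalled hour: ${estimate_run_cost(3600):.2f}")
    # Seconds of compute covered by a one dollar cap.
    print(f"seconds per dollar: {max_runtime_for_budget(1.0):.0f}")
```

At these rates a single stalled hour costs under a dollar, so the risk comes from many concurrent or looping runs rather than one long job, which is why quotas and alerts matter more than the headline rate.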

AI Code Sandbox Tools Comparison: Quick Overview

| Tool | Best For | Pricing Model | Highlights |
|---|---|---|---|
| RespCode | Embedded and systems teams needing cross arch verification | Web free, API per generation, IDE subscription, FPGA per synthesis per vendor info | Multi model orchestration, cross arch sandboxes, FPGA simulation |
| HopX | App embedded code execution, CI isolation, long jobs | Usage based per second for vCPU and memory per vendor info | Firecracker style microVMs, streaming output, process control |
| Runloop | Enterprise agent workstations with audit needs | Usage based with trial credits, enterprise quotes available | Devboxes with snapshots, blueprints, egress policies, VPC deployment covered in a press release (PR Newswire) |
| Agent Sandbox | Product teams offering safe code interpreter features | Usage based per second compute and per MB storage, cited by third party | File in or out, manifest based installs, simple API |

AI Code Sandbox Platform Comparison: Key Features at a Glance

| Tool | Isolation Model | Code Execution UX | Observability |
|---|---|---|---|
| RespCode | Vendor states sandboxed execution | Web IDE, VS Code, API | Build or run logs and streaming |
| HopX | Vendor states microVM isolation | SDK with streaming stdout or stderr | Metrics, logs, and process APIs |
| Runloop | VM based devboxes | SDK, CLI, dashboard | Snapshots, monitoring, network policies |
| Agent Sandbox | Vendor states isolated workspace | API for code, sessions, artifacts | Session logs and file artifacts |

AI Code Sandbox Deployment Options

| Tool | Cloud API | On Premises or VPC | Integration Complexity |
|---|---|---|---|
| RespCode | Yes | Not publicly documented | Low, web IDE and extensions |
| HopX | Yes | Not publicly documented | Low, SDK focused |
| Runloop | Yes | VPC deployment option publicly announced | Moderate, enterprise rollout |
| Agent Sandbox | Yes | Not publicly documented | Low, API first |

AI Code Sandbox Strategic Decision Framework

| Critical Question | Why It Matters | What to Evaluate |
|---|---|---|
| What isolation boundary protects the host from untrusted code | VM or gVisor class boundaries reduce host kernel exposure | MicroVMs like Firecracker and gVisor class sandboxes, plus SELinux or AppArmor where relevant |
| How fast can a clean runtime start | Agents benefit from sub second cold starts for interactive tasks | Snapshot based startup and pre warmed pools |
| Can you restrict egress by FQDN or CIDR | Prevents data exfiltration and supply chain abuse | Kubernetes NetworkPolicy and FQDN egress policies |
| Is there a VPC or private deployment path | Regulated workloads often need in tenant deployment | VPC, private link, or on premises options |
| How predictable is pricing under load | Per second metering can spike during long jobs | Quotas, alerts, and usage dashboards |
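The egress question in the framework above comes down to an allowlist check. The sketch below shows that logic in Python at the application level. The domain list and function name are hypothetical, and a check like this complements network layer enforcement rather than replacing it, because code running inside the sandbox can bypass anything that lives only in your integration.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist for illustration; use the domains your agents need.
ALLOWED_DOMAINS = frozenset({"pypi.org", "files.pythonhosted.org"})


def is_egress_allowed(url: str, allowed_domains=ALLOWED_DOMAINS) -> bool:
    """Return True if the URL's host is an allowed domain or a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    if not host:
        # No parseable host (for example a bare string without a scheme): deny.
        return False
    # Match the exact domain or a dot separated subdomain, so that
    # "evil-pypi.org" and "pypi.org.attacker.example" are both rejected.
    return any(host == d or host.endswith("." + d) for d in allowed_domains)


if __name__ == "__main__":
    for candidate in (
        "https://pypi.org/simple/requests/",
        "https://evil-pypi.org/payload",
        "https://pypi.org.attacker.example/",
    ):
        print(candidate, is_egress_allowed(candidate))
```

Matching on a leading dot instead of a plain suffix is the design choice that matters here: a naive `endswith("pypi.org")` would approve lookalike hosts.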

AI Code Sandbox Solutions Comparison: Pricing and Capabilities Overview

| Organization Size | Recommended Setup | Cost Notes |
|---|---|---|
| Individual developer or small startup | Agent Sandbox or HopX for pay as you go experiments, RespCode web for free cross arch tests | Varies by seconds of compute and storage, so verify current vendor rates or review site summaries. Set budgets and alerts to cap spend |
| Mid size team | Mix of HopX for embedded code execution and RespCode API for CI checks, add quotas | Usage based, depends on concurrency and job length. Negotiate volume discounts |
| Enterprise | Runloop devboxes in VPC for long lived agent workstations, pair with RespCode for cross arch verification | Enterprise pricing is not publicly listed for Runloop, so contact for a quote. Contract dependent |

Problems & Solutions

- Problem: Running untrusted AI generated code in production risks host compromise and data leakage.

  Solution: Use VM or gVisor class sandboxes so system calls are intercepted or isolated from the host. Google documents how gVisor provides a userspace kernel that separates workloads, which is a strong baseline for running untrusted code. Kubernetes network policies restrict egress to only what is needed, and FQDN based rules, where the platform supports them, help prevent data exfiltration. MicroVM snapshot techniques further improve startup performance for safe, bursty workloads. HopX applies this pattern for SDK driven execution, and Agent Sandbox focuses the same idea on "code interpreter" use cases.

- Problem: Long lived agent workflows need stable, observable environments with repeatable state.

  Solution: Runloop's devbox concept targets exactly this, with VPC deployment announced publicly and controls for snapshots, blueprints, and network egress that match enterprise needs. This reduces operational risk compared to ad hoc runners and gives security teams a clear point to monitor.

- Problem: Cross architecture validation is expensive and slow without the right tooling.

  Solution: RespCode addresses this gap with multi model generation and sandbox execution across x86, ARM64, and RISC-V, which mirrors industry momentum behind heterogeneous compute, including recent RISC-V advances reported in the press (Tom's Hardware coverage of RISC V momentum). This helps firmware and edge teams catch portability issues early.

- Problem: Costs can spike with per second metering when agents stall or loop.

  Solution: Favor platforms that expose clear metrics, quotas, and alerts. Marketplace guidance for AI agents emphasizes usage based metering with transparent metrics, which you can mirror in your cost controls regardless of vendor (Google Cloud Marketplace pricing models). In practice, set per run budget caps and kill switches in your integration.
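A per run budget cap with a kill switch can be sketched as a wall clock ceiling derived from the budget and the metered rate. The Python below is a minimal local stand-in that uses the standard library's `subprocess` timeout. The function name, result fields, and rate are assumptions for illustration, and a real integration would call the vendor's own stop or terminate API instead of killing a local process.

```python
import subprocess
import sys


def run_with_budget(cmd: list[str], budget_usd: float, usd_per_second: float) -> dict:
    """Run cmd and kill it once its metered runtime would exceed budget_usd."""
    if budget_usd <= 0 or usd_per_second <= 0:
        raise ValueError("budget and rate must be positive")
    # Wall clock ceiling implied by the budget at the given per second rate.
    max_seconds = budget_usd / usd_per_second
    try:
        # subprocess.run kills the child and raises TimeoutExpired
        # when the timeout elapses, which acts as the kill switch.
        done = subprocess.run(cmd, capture_output=True, text=True, timeout=max_seconds)
    except subprocess.TimeoutExpired:
        return {"killed": True, "returncode": None, "stdout": "", "max_seconds": max_seconds}
    return {
        "killed": False,
        "returncode": done.returncode,
        "stdout": done.stdout,
        "max_seconds": max_seconds,
    }


if __name__ == "__main__":
    # Stand-in for an agent that stalls: it sleeps far longer than the budget allows.
    stalled = [sys.executable, "-c", "import time; time.sleep(30)"]
    # Hypothetical rate of 0.00025 dollars per second, capped at one second of spend.
    result = run_with_budget(stalled, budget_usd=0.00025, usd_per_second=0.00025)
    print("killed:", result["killed"], "after at most", result["max_seconds"], "seconds")
```

Deriving the timeout from the budget keeps the cap expressed in dollars, so the same limit still holds if the vendor's rate changes.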

Bottom Line

Most teams discover sandbox gaps only after an expensive incident, not from a tidy readiness review. The fastest, least risky path is to separate concerns: pick a sandbox model that matches your threat profile and performance goals, then layer network controls, observability, and cost caps. The four platforms above reflect the tradeoffs, from SDK simplicity to enterprise VPC deployment. If you need proof for leadership, the average cost of a breach dropped to 4.44 million dollars in 2025, per IBM, which is still a massive number that makes small investments in isolation and guardrails look cheap. For emerging alternatives and context, even mainstream runtimes are launching agent focused sandboxes, as covered in recent industry news (InfoWorld on Deno Sandbox). Choose based on isolation boundary, startup latency, deployment model, and pricing transparency, and you will save both time and money.